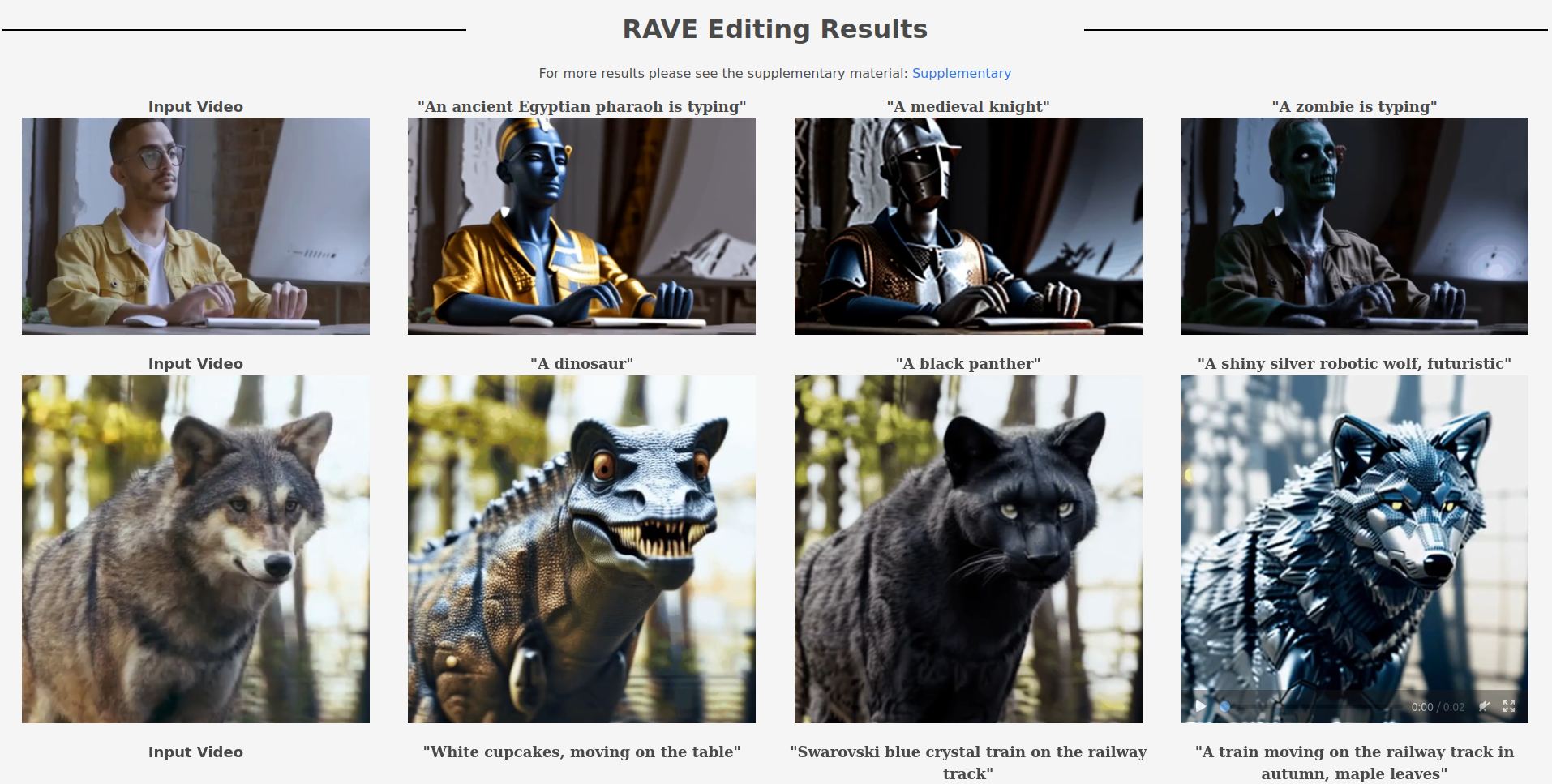

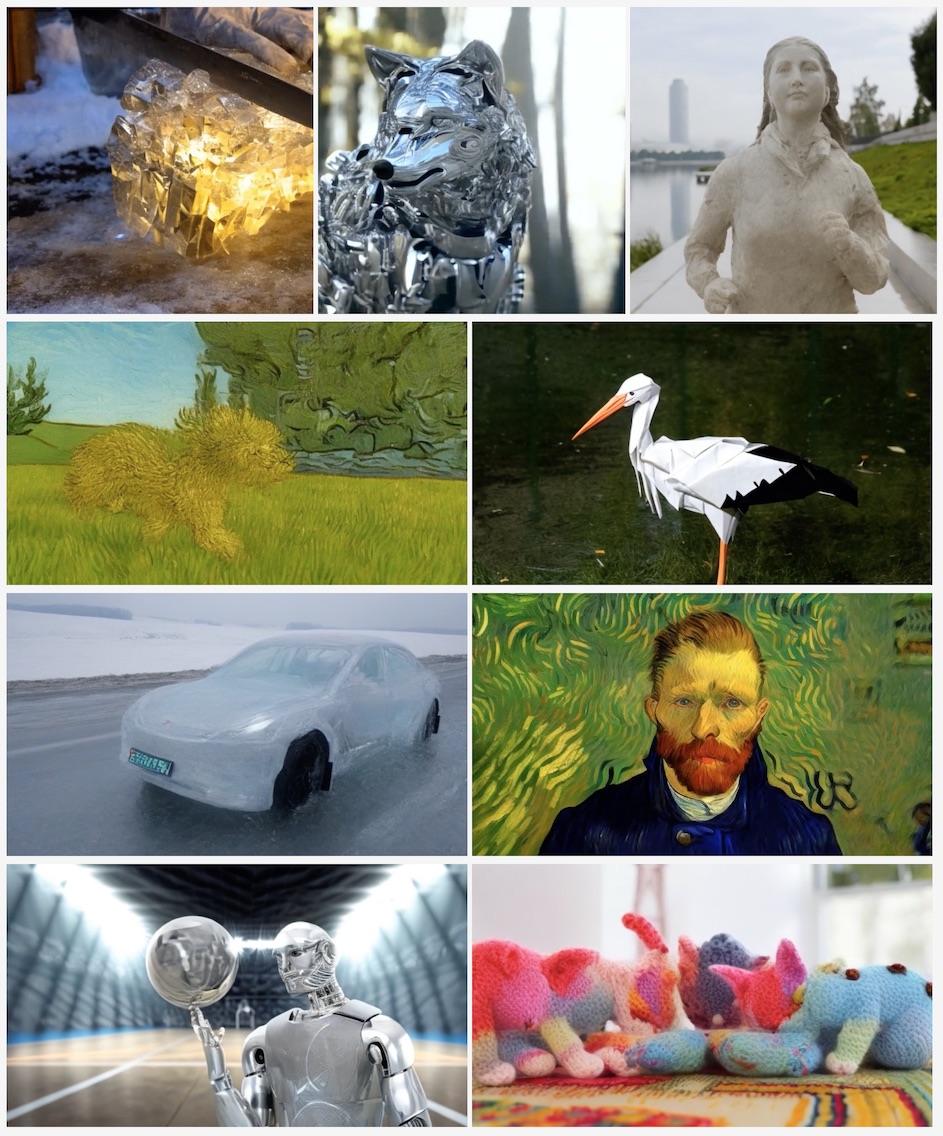

RAVE: Randomized Noise Shuffling for Fast and Consistent Video Editing with Diffusion Models

https://rave-video.github.io/

RAVE is a zero-shot video editing method that uses pre-trained text-to-image diffusion models to produce high-quality edits with fine-grained user control. To address the lag in visual quality and user control seen in existing video editing models, RAVE employs a novel noise shuffling strategy that exploits spatio-temporal interactions between frames, yielding faster and temporally consistent video output. The method is memory-efficient, so it can handle longer videos, and it supports a broad range of edits, from local attribute adjustments to shape transformations. Its versatility is demonstrated on a comprehensive video evaluation dataset covering object-focused scenes, human activities, and dynamic scenes. Quantitative and qualitative experiments show that RAVE outperforms existing methods across these video editing scenarios.
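The summary above does not spell out how the noise shuffling works. As a rough, toy illustration only, the sketch below assumes that frame latents are tiled into fixed-size grids, that the assignment of frames to grids is redrawn at random on every denoising step, and that each grid is denoised jointly so its frames influence one another. The function names, the scalar "latents", and `denoise_grid` (a stand-in for a real diffusion model call) are all hypothetical and are not taken from the RAVE code.

```python
import random


def shuffle_into_grids(num_frames, grid_rows, grid_cols, rng):
    """Randomly partition frame indices into grids of grid_rows * grid_cols frames."""
    per_grid = grid_rows * grid_cols
    if num_frames % per_grid != 0:
        raise ValueError("num_frames must be a multiple of grid_rows * grid_cols")
    order = list(range(num_frames))
    rng.shuffle(order)  # a fresh random frame-to-grid assignment
    return [order[i:i + per_grid] for i in range(0, num_frames, per_grid)]


def denoise_grid(latents):
    """Hypothetical stand-in for one joint denoising step over a grid.

    A real model would process the tiled grid as a single image; here each
    frame is simply pulled halfway toward the grid mean to mimic frames
    in the same grid interacting.
    """
    mean = sum(latents) / len(latents)
    return [0.5 * x + 0.5 * mean for x in latents]


def edit_video(latents, steps, grid_rows=3, grid_cols=3, seed=0):
    """Run `steps` denoising steps, reshuffling frames into grids before each one."""
    rng = random.Random(seed)
    latents = list(latents)
    for _ in range(steps):
        for grid in shuffle_into_grids(len(latents), grid_rows, grid_cols, rng):
            updated = denoise_grid([latents[i] for i in grid])
            # Write results back to the original frame positions (unshuffle).
            for idx, value in zip(grid, updated):
                latents[idx] = value
    return latents


if __name__ == "__main__":
    frames = [float(i) for i in range(18)]  # 18 toy frames, two 3x3 grids
    print(edit_video(frames, steps=5))
```

Because the grid membership changes on every step, a frame is mixed with a different set of neighbours each time, so information spreads across the whole clip even though only one grid's worth of frames is processed together. In this toy version that shows up as the frame values drifting toward a common value over the steps.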
