QLoRA: Efficient Finetuning of Quantized LLMs

https://github.com/artidoro/qlora

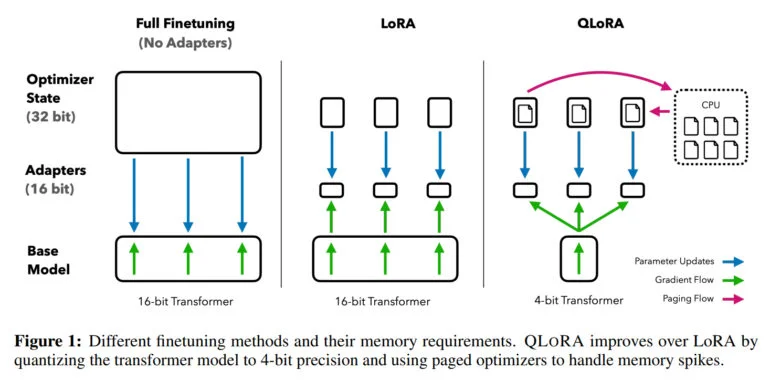

QLoRA makes it possible to fine-tune large language models on a single GPU. Using this method, the authors trained Guanaco, a family of chatbots based on Meta's LLaMA models, which reaches over 99% of ChatGPT's performance level on the Vicuna benchmark. QLoRA reduces the memory requirement by quantizing the pretrained model's weights to 4 bits, keeping them frozen, and training a small set of low-rank adapter (LoRA) weights on top. The team found that data quality matters more than quantity for fine-tuning, with models trained on OpenAssistant data performing better than models trained on larger datasets. Even the smallest Guanaco model outperformed other models, and the team believes that QLoRA will make fine-tuning more accessible, narrowing the resource gap between large corporations and small teams. They also see potential for private models on mobile devices, where fine-tuning could run on the smartphone itself and user data would never have to leave it.
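
The two ideas in the paragraph above, 4-bit quantization of frozen base weights and a trainable low-rank adapter, can be illustrated with a minimal pure-Python sketch. This is not the authors' implementation: it uses plain blockwise absmax quantization to uniform integer levels rather than the NF4 data type from the paper, it omits double quantization and paged optimizers, and all function names, the block size, and the `alpha` scaling value are assumptions chosen for illustration. The memory helper counts weight storage only and ignores quantization constants, adapters, activations, and optimizer state.

```python
import random


def weight_memory_gb(n_params, bits):
    """Rough storage for the weights alone, in GB (10^9 bytes)."""
    return n_params * bits / 8 / 1e9


def quantize_4bit(weights, block_size=4):
    """Blockwise absmax quantization to signed integer codes in [-7, 7].

    Illustrative stand-in for NF4: levels here are uniformly spaced.
    """
    codes, scales = [], []
    for start in range(0, len(weights), block_size):
        block = weights[start:start + block_size]
        # One scale per block; fall back to 1.0 for an all-zero block.
        scale = max(abs(w) for w in block) / 7 or 1.0
        scales.append(scale)
        codes.extend(round(w / scale) for w in block)
    return codes, scales


def dequantize_4bit(codes, scales, block_size=4):
    """Map integer codes back to approximate float weights."""
    return [c * scales[i // block_size] for i, c in enumerate(codes)]


def matvec(matrix, vector):
    """Dense matrix-vector product on nested lists."""
    return [sum(m * v for m, v in zip(row, vector)) for row in matrix]


def lora_forward(codes, scales, shape, lora_a, lora_b, x, alpha=16, block_size=4):
    """Compute y = W_dequant x + (alpha / r) * B (A x).

    The quantized base weights stay frozen; only lora_a and lora_b
    would receive gradient updates during fine-tuning.
    """
    rows, cols = shape
    flat = dequantize_4bit(codes, scales, block_size)
    w = [flat[r * cols:(r + 1) * cols] for r in range(rows)]
    base = matvec(w, x)
    rank = len(lora_a)
    update = matvec(lora_b, matvec(lora_a, x))
    return [b + (alpha / rank) * u for b, u in zip(base, update)]


if __name__ == "__main__":
    random.seed(0)
    rows, cols, rank = 4, 8, 2
    w_flat = [random.uniform(-1, 1) for _ in range(rows * cols)]
    codes, scales = quantize_4bit(w_flat)

    # Common LoRA initialization: A small random, B zero, so the adapter
    # initially leaves the base model's output unchanged.
    lora_a = [[random.gauss(0, 0.01) for _ in range(cols)] for _ in range(rank)]
    lora_b = [[0.0] * rank for _ in range(rows)]

    x = [1.0] * cols
    print(lora_forward(codes, scales, (rows, cols), lora_a, lora_b, x))
    print(weight_memory_gb(65e9, 16), weight_memory_gb(65e9, 4))
```

Because `lora_b` starts at zero, the first forward pass matches the quantized base model exactly, and training only has to learn the small `rank x cols` and `rows x rank` matrices instead of the full weight matrix.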
