MiniGPT-4: Enhancing Vision-language Understanding with Advanced Large Language Models

https://github.com/Vision-CAIR/MiniGPT-4

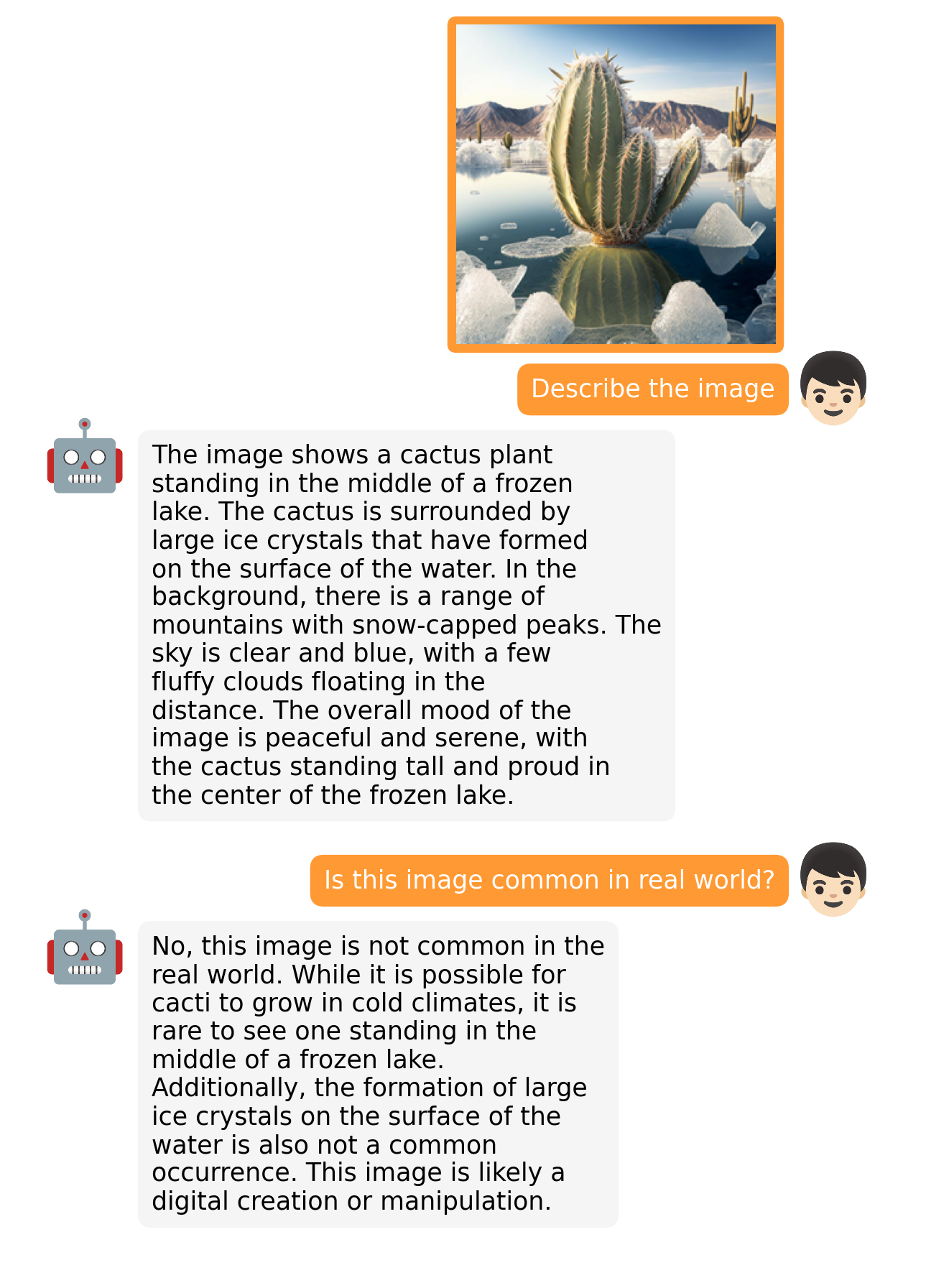

MiniGPT-4 is a vision-language model that aligns a frozen visual encoder with a frozen large language model (LLM) using a single projection layer. The authors trained MiniGPT-4 in two stages. The first stage is conventional pretraining on 5 million aligned image-text pairs. Because the model's generations after this stage were not reliable enough, the authors curated a small, high-quality set of image-text pairs with the help of ChatGPT. The second stage finetunes the model on this dataset using a conversation template, which improves generation reliability and overall usability. The results show that MiniGPT-4 possesses capabilities similar to those of GPT-4, such as generating detailed image descriptions and creating websites from handwritten drafts. It also shows other emerging capabilities, such as writing stories and poems based on images and teaching users how to cook from food photos. The method is computationally efficient, since only the projection layer is trained, and it highlights the potential of advanced large language models for vision-language understanding.
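The alignment idea described above can be illustrated with a toy sketch in plain Python. This is not the authors' implementation: `frozen_visual_encoder`, `LinearProjection`, `build_llm_inputs`, and all dimensions are hypothetical stand-ins chosen for illustration. The sketch only shows the data flow, in which a fixed encoder produces visual tokens, one linear layer maps them to the LLM's embedding size, and the projected tokens are placed in front of the text embeddings.

```python
import random


def frozen_visual_encoder(pixels, num_tokens=4, dim=8):
    """Toy placeholder for a frozen vision encoder (hypothetical).

    It has no trainable state and returns `num_tokens` feature vectors
    of length `dim`, derived deterministically from the input.
    """
    mean = sum(pixels) / len(pixels)
    return [[mean * (i + 1) / (j + 1) for j in range(dim)] for i in range(num_tokens)]


class LinearProjection:
    """The only trainable component in this sketch: one linear layer."""

    def __init__(self, in_dim, out_dim, seed=0):
        rng = random.Random(seed)
        # weight has shape (out_dim, in_dim); bias has shape (out_dim,)
        self.weight = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(out_dim)]
        self.bias = [0.0] * out_dim

    def __call__(self, tokens):
        # Apply y = W x + b to every visual token.
        return [
            [sum(w * x for w, x in zip(row, tok)) + b for row, b in zip(self.weight, self.bias)]
            for tok in tokens
        ]

    def num_parameters(self):
        return len(self.weight) * len(self.weight[0]) + len(self.bias)


def build_llm_inputs(visual_tokens, text_embeddings):
    """Place projected visual tokens in front of the text embeddings."""
    return visual_tokens + text_embeddings


VISION_DIM, LLM_DIM = 8, 16  # assumed sizes, far smaller than any real model
projection = LinearProjection(VISION_DIM, LLM_DIM)
visual_tokens = projection(frozen_visual_encoder([0.2, 0.4, 0.6]))
text_embeddings = [[0.0] * LLM_DIM for _ in range(3)]  # stand-in for frozen LLM embeddings
llm_inputs = build_llm_inputs(visual_tokens, text_embeddings)
print(len(llm_inputs), len(llm_inputs[0]), projection.num_parameters())  # 7 16 144
```

In this toy setup the trainable parameter count is just that of the projection (8 × 16 weights plus 16 biases), which is the intuition behind the efficiency claim: the encoder and the LLM stay fixed, so training touches only the small layer between them.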
