CoDi: Any-to-Any Generation via Composable Diffusion

https://github.com/microsoft/i-Code/tree/main/i-Code-V3

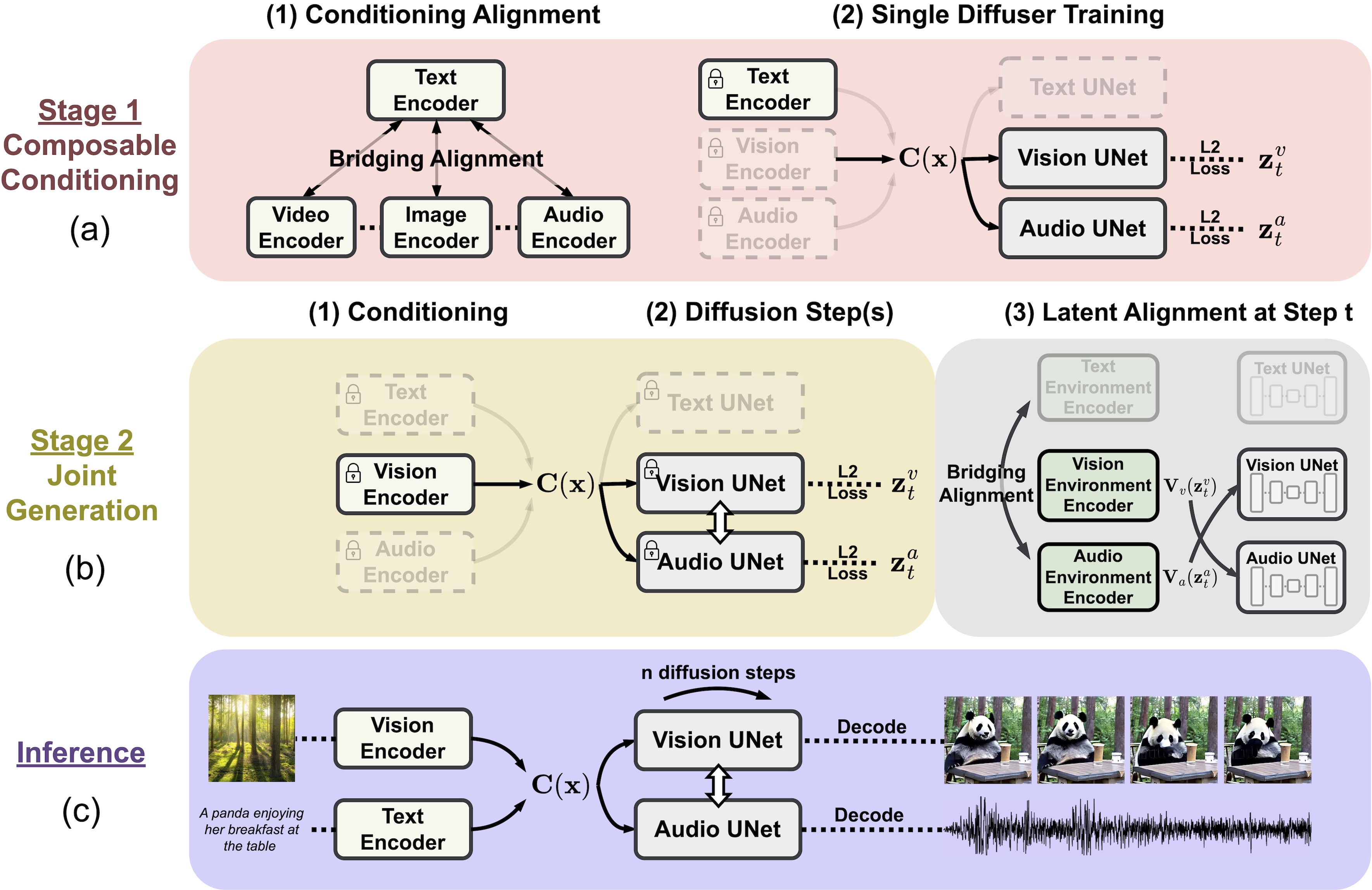

Composable Diffusion (CoDi) is a generative model that can produce any combination of output modalities (language, images, video, or audio) from any combination of input modalities. It can generate several outputs at the same time and is not restricted to a fixed set of input types. Even where no training data exists for a given combination of modalities, CoDi aligns inputs and outputs so that it can still generate that combination. It does this with a composable generation strategy that builds a shared multimodal space by bridging alignment in the diffusion process, which enables synchronized generation of intertwined modalities such as temporally aligned video and audio.
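
A shared multimodal space is useful because embeddings from different encoders become directly comparable, so several inputs can be merged into one conditioning signal. The sketch below illustrates that idea under stated assumptions: each modality has already been encoded into the same vector space, and inputs are combined by a normalized weighted average. The helper names `l2_normalize` and `compose_conditions`, the toy vectors, and the averaging rule are hypothetical illustrations, not code from the CoDi repository.

```python
import math
from typing import Dict, List

Vector = List[float]


def l2_normalize(v: Vector) -> Vector:
    """Scale a vector to unit length so embeddings from different modalities share one scale."""
    norm = math.sqrt(sum(x * x for x in v))
    if norm == 0.0:
        raise ValueError("cannot normalize a zero vector")
    return [x / norm for x in v]


def compose_conditions(embeddings: Dict[str, Vector], weights: Dict[str, float]) -> Vector:
    """Hypothetical helper: merge per-modality embeddings that are ASSUMED to live in one shared space.

    Each embedding is unit-normalized, then combined as a weighted average.
    Weights are rescaled to sum to 1, so adding another input modality does
    not change the overall scale of the resulting condition vector.
    """
    if not embeddings:
        raise ValueError("need at least one input modality")
    if set(embeddings) != set(weights):
        raise ValueError("every modality needs exactly one weight")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")

    dim = len(next(iter(embeddings.values())))
    combined = [0.0] * dim
    for name, emb in embeddings.items():
        if len(emb) != dim:
            raise ValueError(f"{name} embedding has dimension {len(emb)}, expected {dim}")
        unit = l2_normalize(emb)
        w = weights[name] / total
        for i, x in enumerate(unit):
            combined[i] += w * x
    return combined


if __name__ == "__main__":
    # Toy 3-dimensional "text" and "audio" embeddings, equally weighted.
    cond = compose_conditions(
        {"text": [1.0, 0.0, 0.0], "audio": [0.0, 2.0, 0.0]},
        {"text": 0.5, "audio": 0.5},
    )
    print(cond)  # [0.5, 0.5, 0.0]
```

Normalizing before averaging keeps one encoder with larger-magnitude outputs from dominating the mix; the weights then act as the only knob for how strongly each input steers generation.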
